Advanced OpenClaw Routing: Directing Prompts to Different LLMs

The world of AI agents is no longer about finding one perfect model. It's about building a smart system that knows which tool to use for which job. OpenClaw, a powerful and flexible AI agent framework, excels at this through advanced routing. This guide will take you deep into the mechanics of directing your prompts to the most suitable Large Language Model (LLM), whether it's a high-powered cloud model, a cost-effective local instance, or a specialized AI for a specific task.

What Is Advanced OpenClaw Routing and Why Does It Matter?

At its simplest, routing is the process of deciding which LLM should handle a given prompt. Instead of sending every request to a single model like GPT-4, OpenClaw can analyze the request and send it to the best available option. This matters for three critical reasons: capability, cost, and privacy.

For example, a complex coding problem might be best sent to a model fine-tuned for programming, while a simple summarization task could go to a cheaper, faster model. Sensitive data might be routed to a local LLM running on your own hardware, keeping it off external servers. Distributing work this way turns a basic AI agent into an efficient, resilient system that adapts to your specific needs and constraints.

Direct Answer: Advanced OpenClaw routing is a configuration system that directs user prompts to different LLMs based on predefined rules, such as prompt content, cost, privacy requirements, or model specialization. This approach maximizes efficiency, reduces expenses, and enhances security by leveraging the unique strengths of various AI models within a single, cohesive agent framework.

Core Concepts: Understanding LLM Routing Strategies

Before diving into configuration, it's essential to understand the different routing strategies available. These strategies form the logic layer of your OpenClaw setup. The most common approaches include:

- Rule-Based Routing: The simplest method. You define explicit rules, such as "if the prompt contains the word 'code', send it to Model A" or "always use Model B for tasks under 100 tokens."

- Cost-Based Routing: This strategy prioritizes budget. It sends simple, non-critical tasks to cheaper models (e.g., GPT-3.5) and reserves expensive, powerful models (e.g., GPT-4) for complex requests. This is crucial for managing API costs at scale.

- Capability-Based Routing: Here, the system routes prompts based on the perceived task. A prompt asking for creative writing might go to a model known for narrative flair, while a data analysis request goes to a model with stronger reasoning capabilities.

- Fallback Chains: This is a resilience strategy. You configure a primary model, and if it fails (due to rate limits, errors, or timeouts), the system automatically tries a secondary or tertiary model. This ensures high availability.

Understanding these strategies allows you to design a routing system that aligns with your goals, whether they are financial, operational, or technical. The choice of strategy directly impacts the performance and reliability of your OpenClaw agent.

Step-by-Step Setup: Configuring OpenClaw for Multi-LLM Routing

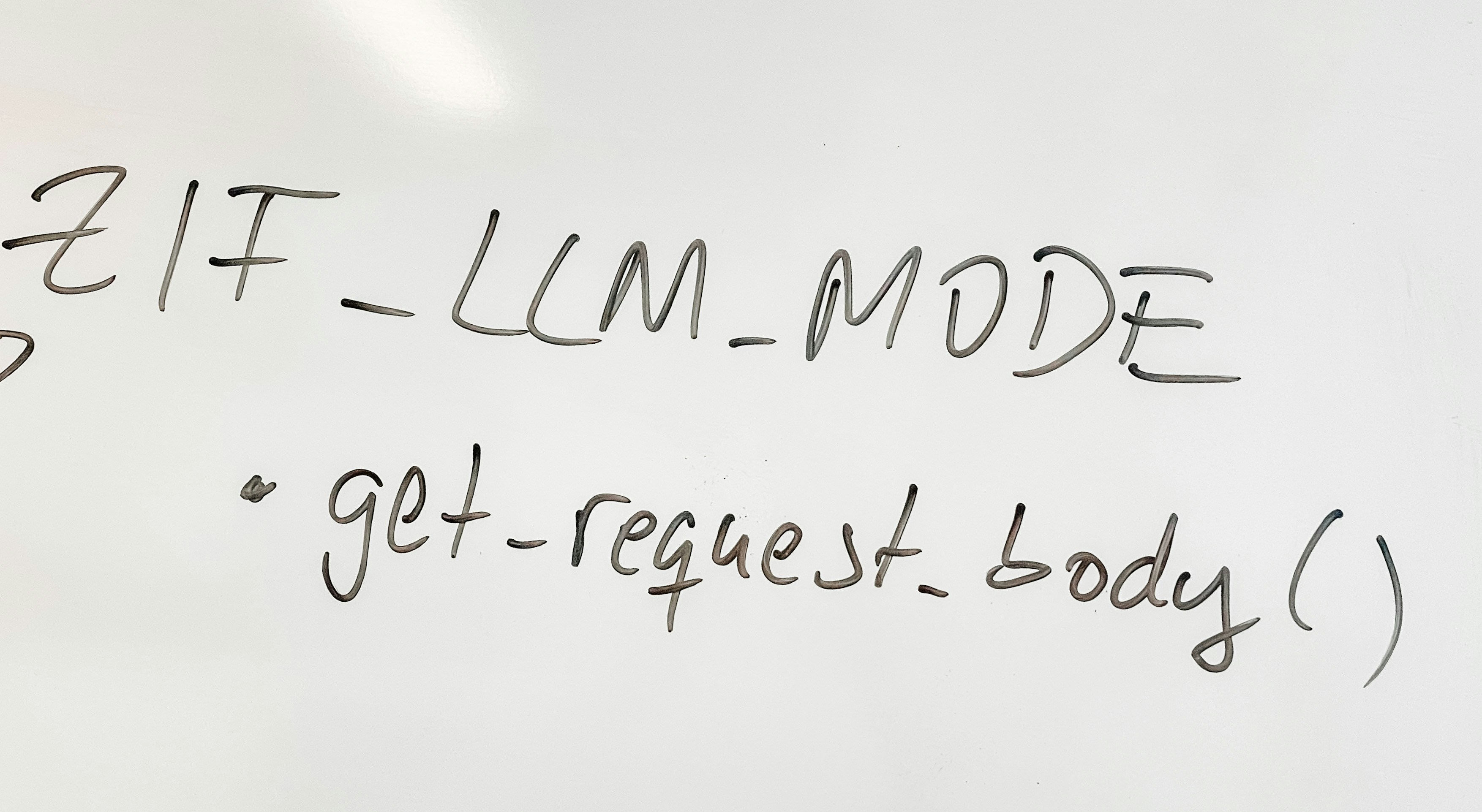

Setting up routing in OpenClaw involves editing its configuration file, typically a YAML or JSON document. This file defines the "router" component, which contains the rules and model endpoints. While the exact syntax may vary, the conceptual steps are consistent.

First, you need to define each LLM endpoint. This includes the API provider (e.g., OpenAI, Anthropic, or a local Ollama server), the model name, and any required authentication keys. For instance, you would list both gpt-4 and claude-3-opus as separate endpoints with their respective API credentials.
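As a rough illustration of the endpoint step, the sketch below models each endpoint as a small Python record. It is not OpenClaw's actual configuration schema: the `Endpoint` class, its field names, the `ENDPOINTS` registry, and the environment variable names are all assumptions made for this example. Only the model names and the local Ollama URL come from this guide.

```python
import os
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Endpoint:
    """One LLM backend the router can send prompts to (hypothetical schema)."""
    provider: str                      # e.g. "openai", "anthropic", "ollama"
    model: str                         # model name as written in this guide
    base_url: str                      # where requests are sent
    api_key_env: Optional[str] = None  # env var holding the key; None for keyless local servers

    def api_key(self) -> Optional[str]:
        """Look the key up at call time so the secret is never stored in the config."""
        return os.environ.get(self.api_key_env) if self.api_key_env else None


# Hypothetical registry: two cloud endpoints and one local Ollama server.
ENDPOINTS = {
    "gpt-4": Endpoint("openai", "gpt-4", "https://api.openai.com/v1", "OPENAI_API_KEY"),
    "claude-3-opus": Endpoint("anthropic", "claude-3-opus", "https://api.anthropic.com", "ANTHROPIC_API_KEY"),
    "local-mistral": Endpoint("ollama", "mistral", "http://localhost:11434"),
}
```

Storing the name of an environment variable rather than the key itself keeps credentials out of a file that is likely to be committed or shared.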

Next, you create the routing rules. A rule might look like this: if prompt_length < 50 and task_type == "summarization", use model "gpt-3.5-turbo". You can chain multiple rules, and OpenClaw will evaluate them in order. The first matching rule determines the routing destination.
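The first-match behavior described above can be sketched in a few lines of Python. This is an illustration of the evaluation order only, not OpenClaw's rule engine: `Request`, `Rule`, `RULES`, and `route` are hypothetical names, a word count stands in for `prompt_length`, and the second rule and the default model are invented to make the ordering visible.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Request:
    prompt: str
    task_type: str = "general"

    @property
    def prompt_length(self) -> int:
        # Crude stand-in: counts whitespace-separated words, not real tokens.
        return len(self.prompt.split())


@dataclass(frozen=True)
class Rule:
    name: str
    matches: Callable[[Request], bool]
    model: str


# Rules are evaluated top to bottom; the first rule that matches wins.
RULES = [
    Rule("short-summaries",
         lambda r: r.prompt_length < 50 and r.task_type == "summarization",
         "gpt-3.5-turbo"),
    Rule("coding", lambda r: r.task_type == "coding", "claude-3-opus"),
]
DEFAULT_MODEL = "gpt-4"  # used when no rule matches (assumption for this sketch)


def route(request: Request, rules: Sequence[Rule] = RULES,
          default: str = DEFAULT_MODEL) -> str:
    """Return the model of the first matching rule, or the default."""
    for rule in rules:
        if rule.matches(request):
            return rule.model
    return default
```

Because evaluation stops at the first match, narrow rules belong above broad ones; a catch-all placed at the top would shadow everything below it.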

Finally, you test the configuration. Start with simple prompts to verify that the routing logic works as expected. For example, send a short, simple query and confirm it goes to the cheaper model. Then, send a complex coding problem and verify it routes to the more capable model. This iterative testing is key to a reliable setup. A well-structured configuration is the foundation of effective routing, much like the principles discussed in guides for building robust AI pipelines, such as the build-voice-to-text-pipeline-openclaw tutorial.

Routing to Local LLMs: Privacy-First AI with Ollama Integration

One of the most powerful applications of OpenClaw routing is directing prompts to local LLMs, which significantly enhances privacy and control. Tools like Ollama allow you to run models like Llama 2 or Mistral directly on your own hardware. OpenClaw can be configured to route sensitive prompts—such as those containing personal data, proprietary code, or confidential business information—to these local models instead of sending them to cloud providers.

The setup involves adding your local Ollama server as an endpoint in the OpenClaw configuration. You'll specify the local URL (e.g., http://localhost:11434) and the model name. Then, you create a routing rule that identifies sensitive content. For example, you could route any prompt containing keywords like "internal," "confidential," or "private" to the local LLM.
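A keyword rule of this kind might look like the following sketch. The names `SENSITIVE_KEYWORDS`, `is_sensitive`, and `pick_endpoint` are hypothetical, the endpoint dictionaries are illustrative rather than OpenClaw configuration, and `mistral` is simply one of the models mentioned above.

```python
import re

# Keywords taken from the example above; extend the tuple for your own data.
SENSITIVE_KEYWORDS = ("internal", "confidential", "private")

# Whole-word, case-insensitive match, so "internals" or "privately" do not trigger it.
_SENSITIVE_RE = re.compile(
    r"\b(?:" + "|".join(re.escape(k) for k in SENSITIVE_KEYWORDS) + r")\b",
    re.IGNORECASE,
)

LOCAL = {"base_url": "http://localhost:11434", "model": "mistral"}  # local Ollama server
CLOUD = {"base_url": "https://api.openai.com/v1", "model": "gpt-4"}  # cloud provider


def is_sensitive(prompt: str) -> bool:
    """True if the prompt contains any sensitive keyword as a whole word."""
    return _SENSITIVE_RE.search(prompt) is not None


def pick_endpoint(prompt: str) -> dict:
    """Send sensitive prompts to the local model and everything else to the cloud."""
    return LOCAL if is_sensitive(prompt) else CLOUD
```

Keyword matching is a blunt instrument: it misses sensitive text that does not use the listed words and flags harmless prompts that do. Treat it as a first filter, not a guarantee.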

This approach involves a clear trade-off: local models may be slower or less capable than top-tier cloud models, but prompts and responses never leave hardware you control. For many applications, this is an acceptable compromise. Understanding the privacy implications of your model choices is fundamental to responsible AI use. For a deeper dive into this topic, the guide on local-llms-ollama-openclaw-privacy provides essential context.

Cost Optimization: Smart Routing to Minimize API Expenses

API costs can spiral quickly if you're using premium models for every task. Advanced routing is your most effective tool for cost management. By implementing a cost-based routing strategy, you can ensure that only the most demanding requests incur higher expenses.

Consider a workflow that involves both simple information retrieval and complex data synthesis. You can configure OpenClaw to route the simple queries to a cost-effective model like GPT-3.5-Turbo or a local model, while reserving GPT-4 for the complex synthesis tasks. Over thousands of interactions, this can lead to substantial savings.

To implement this, you need the per-token price of each model and a threshold in your routing rules. For instance, you might set a rule: if estimated_cost > $0.05, use model "gpt-4"; else use model "gpt-3.5-turbo". Here the estimated cost acts as a proxy for prompt size and complexity, which the router can approximate from token count or keyword analysis. The routing decision itself is a cheap local computation made before any LLM is called, so it adds little latency, while the financial benefits can be significant.
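A minimal sketch of that threshold rule follows, under several stated assumptions: the prices are illustrative placeholders rather than current provider pricing, tokens are approximated as one per four characters, a fixed output length is assumed, and the cost is always estimated at the premium model's price so that it works as a size proxy. All function and constant names are hypothetical.

```python
# Placeholder prices in USD per 1,000 tokens. NOT real pricing; check your provider.
PRICE_PER_1K_TOKENS = {"gpt-4": 0.03, "gpt-3.5-turbo": 0.001}

COST_THRESHOLD_USD = 0.05      # threshold from the example rule above
EXPECTED_OUTPUT_TOKENS = 500   # assumed response length for the estimate


def estimate_tokens(text: str) -> int:
    """Rough heuristic: about four characters per token for English text."""
    return max(1, len(text) // 4)


def estimate_cost(prompt: str, model: str) -> float:
    """Estimated cost in USD of sending this prompt to `model`."""
    total_tokens = estimate_tokens(prompt) + EXPECTED_OUTPUT_TOKENS
    return total_tokens / 1000 * PRICE_PER_1K_TOKENS[model]


def route_by_cost(prompt: str) -> str:
    """Apply the rule: estimated_cost > threshold -> gpt-4, else gpt-3.5-turbo."""
    # The estimate uses the premium model's price as a proxy for request size.
    if estimate_cost(prompt, "gpt-4") > COST_THRESHOLD_USD:
        return "gpt-4"
    return "gpt-3.5-turbo"
```

With these placeholder numbers, only prompts longer than roughly 4,700 characters cross the threshold. Prompt length is a weak signal of difficulty, so in practice a rule like this is usually combined with task-type or keyword rules.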

Advanced Routing: Fallback Chains and Content-Based Routing

For production-grade reliability, you need advanced routing techniques like fallback chains and content-based routing. A fallback chain ensures that if your primary model fails, the prompt is automatically sent to a backup model. This is crucial for maintaining uptime, especially when relying on third-party APIs that can experience outages or rate limits.

Configuring a fallback chain in OpenClaw typically involves defining a list of models in order of preference. The system will attempt the first model, and upon receiving an error (such as a 5xx HTTP status, a timeout, or a rate-limit response, commonly HTTP 429), it will automatically try the next model in the list. This creates a resilient system that can gracefully handle failures.
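The control flow of a fallback chain can be sketched as below. This shows the general pattern, not OpenClaw's implementation: `ModelError`, `call_with_fallback`, and the two stub model functions are hypothetical, and which status codes count as retryable is a choice made for this example.

```python
from typing import Callable, List, Sequence, Tuple

# Statuses worth trying another model for: rate limiting and server-side errors.
RETRYABLE_STATUSES = {429, 500, 502, 503, 504}


class ModelError(Exception):
    """Hypothetical error carrying the HTTP status a provider returned."""

    def __init__(self, status: int, message: str = ""):
        super().__init__(f"HTTP {status} {message}".strip())
        self.status = status


def call_with_fallback(
    prompt: str, chain: Sequence[Tuple[str, Callable[[str], str]]]
) -> Tuple[str, str]:
    """Try each (name, call) pair in order; return (name, response) of the first success."""
    failures: List[str] = []
    for name, call in chain:
        try:
            return name, call(prompt)
        except ModelError as exc:
            if exc.status not in RETRYABLE_STATUSES:
                raise  # e.g. a 400 for a malformed request would fail on every model
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all models in the chain failed: " + "; ".join(failures))


# Stub models for demonstration only.
def primary(prompt: str) -> str:
    raise ModelError(503, "service unavailable")


def secondary(prompt: str) -> str:
    return f"echo: {prompt}"
```

Calling `call_with_fallback("hi", [("primary", primary), ("secondary", secondary)])` skips the failing primary and returns the secondary's answer together with its name, which is useful for logging which model actually served the request.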

Content-based routing goes a step further by analyzing the prompt's semantic meaning to decide the best model. While more complex to implement, it can be highly effective. For example, you could use a lightweight local model to classify the prompt's intent (e.g., "coding," "creative writing," "general Q&A") and then route based on that classification. This allows for highly specialized routing without manual rule creation for every possible prompt type. This level of sophistication is what separates basic agents from truly advanced ones. The architectural principles that enable such flexibility are similar to those that make OpenClaw a superior choice compared to other agents, as explored in the comparison of openclaw-vs-autogpt-best-ai-agent.
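The classify-then-route idea can be sketched as follows. The classifier here is a keyword stub standing in for the lightweight local model described above; in a real setup that function would call the model and return one of the intent labels. The model names in `INTENT_TO_MODEL` and every function name are hypothetical.

```python
from typing import Callable

# Intent labels from the example above, mapped to placeholder model names.
INTENT_TO_MODEL = {
    "coding": "code-model",
    "creative writing": "creative-model",
    "general Q&A": "general-model",
}
DEFAULT_INTENT = "general Q&A"


def keyword_classifier(prompt: str) -> str:
    """Stub classifier; a small local LLM would replace this function."""
    text = prompt.lower()
    if any(word in text for word in ("function", "bug", "python", "compile")):
        return "coding"
    if any(word in text for word in ("story", "poem", "lyrics")):
        return "creative writing"
    return DEFAULT_INTENT


def route_by_intent(
    prompt: str, classify: Callable[[str], str] = keyword_classifier
) -> str:
    """Classify the prompt, then look up the model for that intent."""
    intent = classify(prompt)
    # Unknown labels fall back to the general model instead of raising.
    return INTENT_TO_MODEL.get(intent, INTENT_TO_MODEL[DEFAULT_INTENT])
```

Passing the classifier in as a parameter keeps the routing table independent of how intents are detected, so the stub can be swapped for a model call without touching the rest of the router.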

Integrating Decentralized Inputs: Routing from Matrix and Nostr

OpenClaw's strength isn't limited to traditional chat interfaces. It can ingest prompts from decentralized communication channels like Matrix and Nostr, and routing becomes essential here. Different channels might have different contexts or security requirements, making it necessary to direct their inputs to appropriate LLMs.

For instance, a prompt coming from a private, encrypted Matrix room might contain sensitive internal data and should be routed to a local LLM. In contrast, a public query from a Nostr relay might be a general knowledge question suitable for a public cloud model. OpenClaw can be configured to listen on multiple channels and apply routing rules based on the source of the prompt.

This integration requires understanding the specific connectors for these protocols. The routing logic then incorporates the source channel as a variable in the decision-making process. This creates a powerful, decentralized AI system where prompts from various origins are processed intelligently and securely. Setting up such a system is detailed in resources about openclaw-decentralized-channels-matrix-nostr.
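Treating the source channel as a routing variable might look like this sketch. It does not use any real Matrix or Nostr connector API: `IncomingPrompt`, its fields, `route_by_source`, and the `local-llm` and `cloud-llm` names are assumptions, and a real connector would be responsible for filling in `source` and `encrypted`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IncomingPrompt:
    """A prompt plus where it came from (hypothetical shape)."""
    text: str
    source: str              # e.g. "matrix" or "nostr"
    encrypted: bool = False  # True if it arrived via an encrypted room


def route_by_source(msg: IncomingPrompt) -> str:
    """Pick a model class from the channel the prompt arrived on."""
    if msg.source == "matrix" and msg.encrypted:
        return "local-llm"   # private room: keep the content on local hardware
    if msg.source == "nostr":
        return "cloud-llm"   # public relay: content is already public
    return "local-llm"       # unknown or unclassified source: fail closed


# Usage: the same question is routed differently depending on its origin.
private_msg = IncomingPrompt("Summarize Q3 numbers", "matrix", encrypted=True)
public_msg = IncomingPrompt("Summarize Q3 numbers", "nostr")
```

Defaulting unknown sources to the local model is a deliberately conservative choice: a misrouted public question costs a little quality, while a misrouted private one leaks data.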

Real-World Use Cases: From Voice Pipelines to Productivity

The true value of advanced routing is realized in practical applications. Let's explore two common use cases.

Voice-to-Text Processing Pipeline: Imagine a voice assistant built with OpenClaw. When a user speaks a command, the audio is transcribed to text. This text prompt can then be routed. A simple command like "set a timer" might be handled by a fast, local model. A complex request like "analyze the sentiment of this meeting recording and draft a summary" would be routed to a powerful cloud model with strong analytical capabilities. This mirrors the architecture of a build-voice-to-text-pipeline-openclaw system, where routing adds a layer of intelligence.

Everyday Productivity Automation: For personal productivity, routing can optimize task handling. A prompt to "draft an email to my team about the project update" could be routed to a model good at formal writing. A prompt to "generate a list of creative blog post ideas" might go to a more imaginative model. By studying effective prompt structures, as seen in guides for best-openclaw-prompts-everyday-productivity, you can fine-tune your routing rules to match the task's nature, ensuring high-quality outputs every time.

Troubleshooting and Best Practices for Reliable Routing

Even well-configured routing systems can encounter issues. Common problems include misconfigured rules that send prompts to the wrong model, API authentication failures, or latency spikes. A systematic approach to troubleshooting is key.

Start by checking the OpenClaw logs. They will show which routing rule was triggered and which model was selected. If a prompt is routed incorrectly, review your rule conditions for logical errors. For API failures, verify that your keys are correct and that you haven't exceeded rate limits. For latency issues, consider that routing adds a small overhead; ensure your network and the chosen model's response time are within acceptable limits.

Best Practices:

- Keep Rules Simple: Start with clear, broad rules and refine them gradually.

- Test Extensively: Use a variety of prompt types to validate your routing logic.

- Monitor Costs: Regularly review your API usage to ensure your cost-based routing is effective.

- Plan for Failures: Always have a fallback chain, even if it's just a different cloud provider.

- Document Your Configuration: Maintain clear documentation of your routing rules for future maintenance and updates.
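The "Test Extensively" practice lends itself to a table of prompts and expected destinations that you rerun after every rule change. The sketch below is one way to do that; the toy `route` function stands in for your real router, and the model names and `check_routing` helper are hypothetical.

```python
from typing import Callable, List, Tuple


def route(prompt: str) -> str:
    """Toy router standing in for a real routing configuration."""
    text = prompt.lower()
    if "confidential" in text:
        return "local-llm"
    if len(text.split()) < 50:
        return "cheap-model"
    return "capable-model"


# Each case pairs a prompt with the model it is expected to reach.
CASES: List[Tuple[str, str]] = [
    ("What time is it in Tokyo?", "cheap-model"),
    ("Summarize this confidential memo", "local-llm"),
    (" ".join(["analyze"] * 80), "capable-model"),
]


def check_routing(
    router: Callable[[str], str], cases: List[Tuple[str, str]]
) -> List[Tuple[str, str, str]]:
    """Return (prompt, expected, actual) for every case that routed incorrectly."""
    mismatches = []
    for prompt, expected in cases:
        actual = router(prompt)
        if actual != expected:
            mismatches.append((prompt, expected, actual))
    return mismatches
```

An empty result from `check_routing(route, CASES)` means every case reached its expected model. Adding a case each time a prompt is misrouted in production turns the table into a regression suite.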

OpenClaw Routing vs. Other AI Agents: A Comparative Look

How does OpenClaw's routing capability compare to other AI agents? Many agents, like basic scripts or simpler frameworks, lack built-in, flexible routing. They often connect to a single LLM, limiting their adaptability. More advanced agents might offer some routing, but OpenClaw's strength lies in its configurability and ecosystem integration.

OpenClaw's routing is not a bolt-on feature; it's a core part of its architecture, designed from the ground up to handle multiple models and sources. This allows for the sophisticated setups described in this guide, like integrating with decentralized channels or managing complex fallback chains. While an agent like AutoGPT focuses on autonomous task execution, OpenClaw provides fine-grained control over how and where those tasks are processed. This makes it particularly suited for users who need to balance performance, cost, and privacy in a customized way. The choice ultimately depends on whether you need a general-purpose autonomous agent or a highly configurable routing engine for your AI workflows.

Conclusion

Advanced OpenClaw routing transforms your AI agent from a single-tool utility into a versatile, intelligent system. By strategically directing prompts to the most suitable LLMs—whether based on cost, capability, privacy, or resilience—you can build workflows that are more efficient, cost-effective, and secure. The setup requires careful configuration and testing, but the payoff is a robust AI system that adapts to your needs. As the AI landscape continues to evolve, the ability to orchestrate multiple models will become increasingly valuable, and OpenClaw provides a powerful framework to master this skill.

FAQ

1. What is the main advantage of using OpenClaw routing? The main advantage is optimizing your AI workflow by matching each prompt to the best-suited LLM, which improves output quality, reduces costs, and enhances privacy and reliability.

2. Can I use OpenClaw routing with free or local LLMs? Yes, OpenClaw can route prompts to local LLMs like those run by Ollama, which are free to use (after hardware costs) and keep your data private.

3. How complex is it to set up routing rules? The complexity depends on your needs. Basic rule-based routing is straightforward to configure, while advanced strategies like content-based routing require more setup and potentially external classification tools.

4. Does routing add latency to my requests? There is a minimal overhead for the routing decision itself, but the overall latency is primarily determined by the chosen LLM's response time. A well-designed routing system can actually reduce latency by avoiding overloaded models.

5. What happens if my primary LLM fails? With a fallback chain configured, OpenClaw will automatically try your secondary or tertiary model, ensuring the request is still processed without manual intervention.

6. Is it possible to route prompts based on the user? Yes, advanced configurations can include user identity as a routing factor, allowing for personalized model selection (e.g., routing a developer's prompts to a code-specialized model).

7. How do I monitor the performance of my routing system? OpenClaw typically provides logs that show which model was used for each request. You can also track API usage and costs through your provider's dashboard to validate your cost-saving rules.